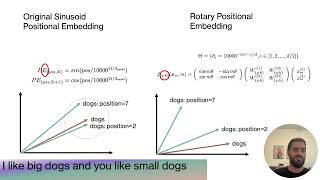

DeepLearning Hero

RoPE (Rotary positional embeddings) explained: The positional workhorse of modern LLMs

2 years ago - 14:06

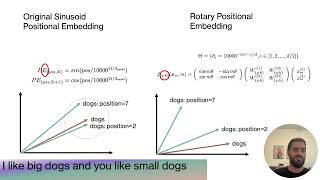

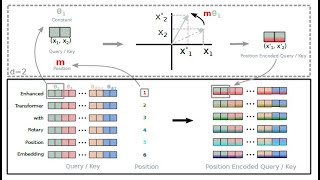

Efficient NLP

Rotary Positional Embeddings: Combining Absolute and Relative

2 years ago - 11:17

Jia-Bin Huang

How Rotary Position Embedding Supercharges Modern LLMs [RoPE]

1 year ago - 13:39

Outlier

Rotary Positional Embeddings Explained | Transformer

6 months ago - 20:28

Umar Jamil

LLaMA explained: KV-Cache, Rotary Positional Embedding, RMS Norm, Grouped Query Attention, SwiGLU

2 years ago - 1:10:55

ExplainingAI

Why Rotating Vectors Solves Positional Encoding in Transformers | Rotary Positional Embeddings(ROPE)

1 month ago - 23:06

Vuk Rosić

Rotary Positional Embeddings & Rotation Matrix + Python LLM code

1 year ago - 11:05

Vizuara

Rotary Positional Encodings | Explained Visually

10 months ago - 34:38

JakZee

Rotary Position Embedding explained deeply (w/ code)

1 year ago - 23:26

BrainDrain

How positional encoding works in transformers?

2 years ago - 5:36

Mr. Gyula Rabai

Large Language Models (LLM) - Part 5/16 - RoPE (Positional Encoding) in AI

1 year ago - 4:17

Zachary Huang

Give me 30 min, I will make RoPE click forever

2 months ago - 29:08

Keyur

DoPE: Denoising Rotary Position Embedding

1 month ago - 10:49

Discover AI

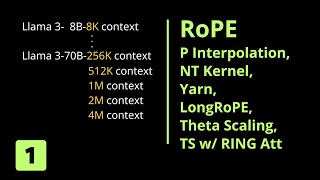

RoPE Rotary Position Embedding to 100K context length

1 year ago - 39:56

Gabriel Mongaras

RoFormer: Enhanced Transformer with Rotary Position Embedding Explained

2 years ago - 39:52

CodeEmporium

Why Sine & Cosine for Transformer Neural Networks

3 years ago - 0:51

CosmoX

DroPE Explained: Dynamic Rotary Position Embedding for Stable Long-Context LLM Inference

1 month ago - 7:34

Sciencing The Data

What Rotary Positional Embeddings (RoPE) don’t want you to know

4 months ago - 12:03

WTF_Zone

[한글자막] RoPE (Rotary positional embeddings) explained: The positional workhorse of modern LLMs

2 years ago - 14:07

Pramod Goyal

Positional Encoding | How LLMs understand structure

1 year ago - 9:10

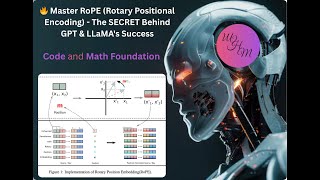

Mehdi Hosseini Moghadam

🔥 Master RoPE (Rotary Positional Encoding) - The SECRET Behind GPT & LLaMA's Success! Code and math

8 months ago - 14:37

Learn With Jay

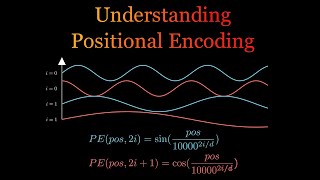

Positional Encoding in Transformers | Deep Learning

1 year ago - 25:54

Jonas Almeida

RoFormer: enhanced transformer with rotary position embedding

1 year ago - 10:23

Umar Jamil

Coding LLaMA 2 from scratch in PyTorch - KV Cache, Grouped Query Attention, Rotary PE, RMSNorm

2 years ago - 3:04:11

Stanford Online

Stanford XCS224U: NLU I Contextual Word Representations, Part 3: Positional Encoding I Spring 2023

2 years ago - 13:02

NLP programming labs

RoPE embeddings : Math explained + implementation from scratch in code

10 months ago - 50:44

Jia-Bin Huang

How Attention Got So Efficient [GQA/MLA/DSA]

3 months ago - 29:02

LOADING_

[Paper Review] RoFormer: Enhanced Transformer with Rotary Position Embedding (RoPE)

5 months ago - 7:13

AI Paper Slop

DoPE: Denoising Rotary Position Embedding (Nov 2025)

3 months ago - 13:41

Serrano.Academy

How do Transformer Models keep track of the order of words? Positional Encoding

1 year ago - 9:50

Marwan Elghitany

Rotary Positional Embedding (RoPE) Explained

2 months ago - 22:02

Arxflix

RoFormer: Transforming Transformers with Rotary Positional Embeddings

1 year ago - 3:34

Listening to AI Papers

[AI Podcast] RoFormer: Enhanced Transformer with Rotary Position Embedding

6 months ago - 7:00

AI Papers

VideoRoPE Enhancing Video Rotary Position Embedding for LLMs

1 year ago - 14:28

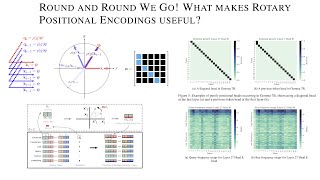

Gabriel Mongaras

Round and Round We Go! What makes Rotary Positional Encodings useful?

1 year ago - 32:31

Gradient Descending

Reading AI Research Paper | RoFormer: Enhanced Transformer with Rotary Position Embedding

Streamed 1 year ago - 1:52:22

Subhankar Ghosh

Rotary Positional Embeddings with code: Easy explanation, No mathematics

2 years ago - 35:01

Ofir Press

Language Models Explained: Position Embeddings, Extrapolation, and Perplexity Evaluation

2 years ago - 28:04

NPTEL IIT Delhi

Lec 16 | Introduction to Transformer: Positional Encoding and Layer Normalization

1 year ago - 1:26:53

Vizuara

Integer and Binary Positional Encodings | Journey towards Rotary Positional Encodings (RoPE)

10 months ago - 36:41

AI Paper Slop

Beyond Real: Imaginary Extension of Rotary Position Embeddings for Long-Context LLMs (Dec 2025)

2 months ago - 15:02

Md Mishfaq Ahmed

The secret sauce behind ROPE (Rotary Positional embedding)

11 months ago - 2:01

CampusX

Positional Encoding in Transformers | Deep Learning | CampusX

1 year ago - 1:13:15

ExplainingAI

Positional Encoding in Transformer | Sinusoidal Positional Encoding Explained

2 months ago - 20:34
